Six sigma is by no means new. Motorola are widely acknowledged as the pioneers of the methodology and they introduced it over twenty years ago. The tools and philosophies behind it date back even further than that, some being more than fifty years old. It is therefore more accurate to describe six sigma as a natural evolution of continuous improvement and quality initiatives, rather than a radical new approach. It combines the best components of earlier initiatives into one structured tool. Therefore, to fully understand six sigma, it is necessary to look at where it has come from and how it has evolved. This article will consider some of the influential thinkers and trends that have made six sigma what it is today.

The History of Six Sigma

Interchangeable Parts

Honoré Blanc was a Parisian gunsmith who, in 1790, revealed a manufacturing system that astonished his peers. He had managed to produce interchangeable parts that were similar enough to allow for random selection of parts during the assembly process. At a public demonstration, Blanc assembled functional guns from components located in bins. He realized the potential of his system to reduce the cost of making and repairing guns and to provide work for “unskilled vagabonds” (ALDER, 1997). He also hoped it would lead to further innovations in manufacturing. Unknown to him, Blanc’s method was one of the earliest attempts to reduce variation in a process and can therefore be considered a direct ancestor of six sigma. Two hundred years later, Blanc’s system of ‘bins’ for part selection is still widely used in industry, albeit modified slightly to the two bin replenishment system!

In the 19th century, manufacturing built upon the foundations laid by Blanc. During this period, quality was concerned with finding ways to verify objectively whether a part matched the original design. To ensure dimensional consistency, go gauges were introduced to verify the minimum dimension of a part. It was another 30 years before no-go gauges were first used, to verify the maximum dimension (FOLARON, 2003).

Flow Production

In 1913, at Highland Park, Henry Ford became the first person to fully integrate a production process. He successfully combined interchangeable parts with standard work and moving conveyance, in what became known as ‘flow production’. Ford is well known as the first to use a moving assembly line, and its introduction made the requirement for consistent parts even more acute. The fast cycle times meant that verifying every component with gauges was not practical. Therefore, sampling and methods to monitor the stability of processes became necessary.

Walter Shewhart

One such method used to monitor process consistency was the control chart, which was invented by Walter Shewhart in 1924. The control chart helped change the role of the quality inspector from one of sorting through parts to identify defects, to one of monitoring processes for stability. Early detection of changes in a process allowed easier identification of the causes and more appropriate corrective actions to be implemented. The result was an improvement in product quality.
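As a rough illustration of the idea, the sketch below computes limits for an individuals chart, one common form of Shewhart control chart, and flags later readings that fall outside them. The measurements, the function names and the choice of an individuals chart are assumptions made for this example rather than details from Shewhart’s own work. The constant 1.128 is the standard factor for turning the average moving range of two consecutive readings into an estimate of sigma.

```python
from statistics import mean

# Standard control-chart constant (d2) for moving ranges of two readings.
D2_FOR_N2 = 1.128


def individuals_chart_limits(baseline):
    """Return (lower limit, centre line, upper limit) from baseline readings."""
    centre = mean(baseline)
    # Moving range: absolute difference between consecutive readings.
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma_hat = mean(moving_ranges) / D2_FOR_N2
    return centre - 3 * sigma_hat, centre, centre + 3 * sigma_hat


def out_of_control(points, lcl, ucl):
    """Return the positions of readings that fall outside the limits."""
    return [i for i, x in enumerate(points) if x < lcl or x > ucl]


# Hypothetical part dimensions (mm) recorded while the process was stable.
baseline = [10.0, 10.2, 9.8, 10.0, 10.2, 9.8, 10.0, 10.2, 9.8, 10.0]
lcl, centre, ucl = individuals_chart_limits(baseline)

# Hypothetical later readings; the third one has drifted.
new_points = [10.1, 9.9, 11.5, 10.0]
signals = out_of_control(new_points, lcl, ucl)
print(f"limits: {lcl:.3f} to {ucl:.3f}, signals at positions {signals}")
```

The inspector’s question changes from “is this part good?” to “has the process changed?”, which is the shift in role described above.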

Shewhart’s work is widely regarded as the foundation upon which total quality management (TQM) was built. He believed that information was critical if efforts to control and manage production processes were to be successful. As a result he developed statistical process control methods to aid managers in decision making.

During the 1920s and 1930s, production became larger in volume and more complex, increasing the need for more sophisticated quality assurance and control. Large quality control departments became common. However, as the size of quality departments increased, the responsibility for quality shifted away from the operators.

As a result of the Second World War, the American economy boomed. Manufacturers enjoyed great demand for their products and had no need to rely on quality as a source of competitive advantage. The costs associated with scrap, rework and inspection were absorbed by the affluent consumers. During this time however, the Japanese economy was in ruins. Faced with the daunting task of rebuilding it, the Japanese drew on the quality teachings of American gurus such as W. Edwards Deming and J. M. Juran. The Japanese understood the potential benefits and implemented quality improvement processes, unlike their American counterparts who largely misunderstood the economics behind the principles.

Dr. W. Edwards Deming

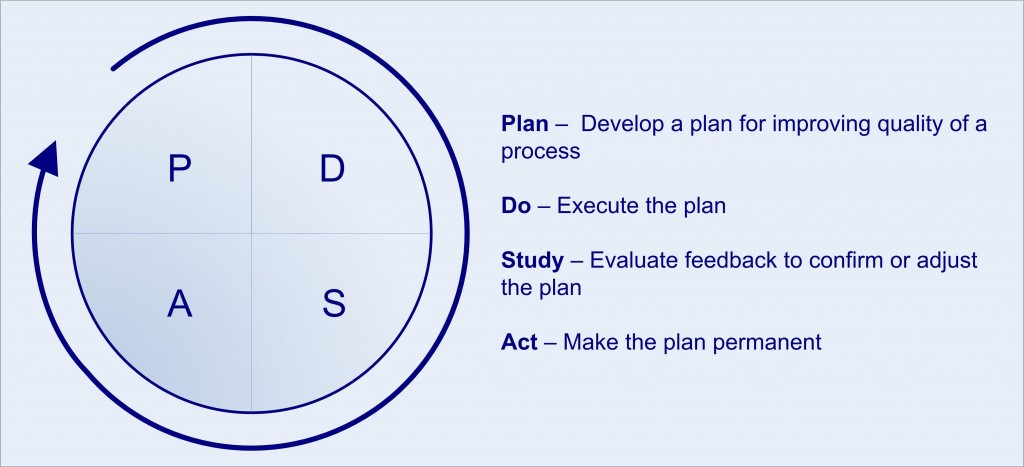

Dr. W. Edwards Deming, a friend of Walter Shewhart, went to Japan in 1950 to teach statistics and U.S. quality methods. His teachings played a large role in helping Japanese manufacturers become a major presence in the global market. Deming stressed the importance of analysing data against statistically computed limits as a way of quantifying variation. This information could then be used to improve future performance. In order to promote continuous improvement, Deming contributed the PDSA Cycle, shown below. Based on the Shewhart cycle (Plan, Do, Check, Act), the Deming Cycle (Plan, Do, Study, Act) is the basis of six sigma’s DMAIC cycle.

The Deming Cycle

Joseph Juran

In 1954, another American, Joseph M. Juran, was invited to Japan. His philosophy was similar to Deming’s but was more evolutionary, in that it involved adapting the existing management system rather than implementing an entirely new one. The two men had much in common. They both believed that the main problems in quality were a result of cultural resistance, not the worker. Neither man endorsed the use of slogans or banners, and both knew that ultimate responsibility for quality lay with senior management. However, unlike Deming, Juran believed that statistics was not the complete remedy for quality problems. He is widely acknowledged as being the first person to bring the human dimension into quality, widening the field from a narrow statistical perspective to one that includes management. Juran also promoted the belief that quality data needs to be presented in financial terms to be understood by managers.

Other important contributions include the application of the 80-20 rule to quality. This states that 80% of problems come from 20% of causes, and that management should concentrate on that 20%, the ‘vital few’. Juran based this on the work of 19th-century economist Vilfredo Pareto and named the principle after him (JURAN, 1975).
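The ‘vital few’ can be picked out with a simple ranking, as in the minimal sketch below. The defect categories, the counts and the function name are hypothetical and are used purely for illustration. The 80% threshold follows the rule of thumb described above; real data rarely splits exactly 80-20.

```python
def vital_few(counts, threshold=0.8):
    """Return the largest causes that together reach `threshold` of all problems."""
    total = sum(counts.values())
    if total == 0:
        return []
    # Rank causes from most to least frequent.
    ranked = sorted(counts.items(), key=lambda item: item[1], reverse=True)
    selected, running = [], 0
    for cause, n in ranked:
        selected.append(cause)
        running += n
        if running / total >= threshold:
            break
    return selected


# Hypothetical defect tallies from an inspection log.
defects = {
    "solder bridge": 120,
    "missing part": 45,
    "scratch": 15,
    "wrong label": 10,
    "misalignment": 6,
    "other": 4,
}
print(vital_few(defects))
```

In this made-up tally, two of the six causes account for more than 80% of the defects, so those are the two that management would tackle first.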

Another important concept proposed by Juran is the idea that the customer is not just the end customer. Each person along the chain has an internal customer, and as such is supplier to the next in the chain. In 1951 he published his “Quality Control Handbook”.

Philip Crosby

One other person who was writing about quality management at this time was Philip Crosby. His philosophy differed significantly from both Deming’s and Juran’s. It revolved around the concept of zero defects which stemmed from the U.S. space programme. He promoted the view that doing things right the first time will always be cheaper than trying to fix defects, and therefore quality is free. According to Crosby, many organisations do not realise the high costs of poor quality.

Crosby produced a fourteen point approach to quality improvement, which initially seemed like a recipe for success. However, with many managers delegating quality tasks and the target of zero defects difficult to reach, Crosby’s approach was dismissed as unrealistic.

“If Japan Can, Why Can’t We?”

By the 1970s, American industries were trailing behind their Japanese rivals. Japanese products were cheaper and more reliable than American equivalents and were attracting customers despite deeply rooted patriotic loyalties. This, combined with the two oil crises, was a huge wake-up call for American industries. They were forced into adopting quality control philosophies as a reactive measure.

On 24th June 1980, NBC aired a documentary entitled “If Japan Can, Why Can’t We?”. It featured the Nashua Corporation, a U.S. company that had significantly improved its results by using the theories of Deming. This was the start of the American quality revolution, with many people, including Ford’s entire top management team, visiting the spotlighted company.

The renewed interest in Deming’s work prompted him to help American managers better understand the concepts of variation and the value of using statistical methods. During his seminars he used graphic demonstrations such as the red bead experiment and easy-to-understand key points such as his seven deadly diseases to get his message across. All of these ideas were collated in his books “Out of the Crisis” and “Quality, Productivity, and Competitive Position”, which were published in the early 1980s. In these books, Deming set out 14 points which he believed could save the U.S. from the threat of Japanese competitors. Many of the points are reflected in key six sigma principles, such as the importance of training (point 6), the importance of leadership (point 7), and interdepartmental teams (point 9).

Other Six Sigma Influencers

Armand Feigenbaum

During the latter half of the twentieth century, many other influential thinkers had an impact on quality. One such person was Armand Feigenbaum who developed the concepts of total quality control, customer-defined quality, and cost of nonconformance. In 1956, he became the first to use the term ‘total quality control’. In the November/December 1956 issue of the Harvard Business Review he stated that “the way out of the dilemma imposed on businessmen by increasingly demanding customers and by ever-spiraling costs of quality … was a new kind of quality control, which might be called total quality control”.

In his article “Total Quality Management & the Contributions of A. V. Feigenbaum”, Lawrence Huggins (1998) describes three of Feigenbaum’s major contributions to quality management.

Firstly, Feigenbaum correctly recognised that managers are concerned with furthering their careers through favourable performance. To do this meant increasing profitability within their department, and one significant way to achieve that was to lower costs. Feigenbaum, who worked at General Electric (GE) between 1937 and 1968, used a number of internal corporate publications to show his peers how quality controls could reduce costs. His work in the area was the first to categorise quality costs into prevention, appraisal, and internal and external failure. The Cost of Poor Quality (COPQ) has become the initial financial analysis conducted for a six sigma project.

Secondly, Feigenbaum focused his efforts on improving the design function. He reasoned that prevention of quality defects started with the engineering designs of products. This is because the design will specify the material and therefore the machines and processes required to produce a product. Placing emphasis on quality at such an early stage, particularly designing to meet the needs of the customer, is now embodied in the concept of Design For Six Sigma (DFSS).

Finally, Feigenbaum also proposed the establishment of a permanent Design Review Board in each product department. This Board was to be composed of a Systems Project Engineer, the responsible Design Engineer, a Product Service Representative, a Reliability Engineer and a Quality Review Representative. In the crafting of this team concept, Feigenbaum paved the way for today’s interdepartmental six sigma teams.

Kaoru Ishikawa

Another influential thinker is Kaoru Ishikawa, who is best known for the cause and effect diagram. Also known as the Ishikawa or fishbone diagram, it is a powerful tool that can easily be used to analyse and solve problems. He also played a key role in the development of the Japanese quality strategy, which is characterised by involvement in quality from the top to the bottom of an organisation, as well as from the start to the finish of the product life cycle. This all-encompassing quality strategy, known as company wide quality control (CWQC), is outlined in Ishikawa’s book “What is Total Quality Control? The Japanese Way”. Ishikawa also understood the importance of top management support, which is a key element in CWQC as well as in six sigma. The bottom-up approach of CWQC is evident in the concept of the quality circle, which is a small group of employees from a work area who voluntarily meet at regular intervals to identify, analyse, and resolve work-related problems. Ishikawa was a major influence in the growth in popularity of quality circles. He was the chief executive director of Quality Control Circle headquarters at the Union of Japanese Scientists and Engineers (JUSE) and also edited JUSE’s two books on the subject, “QC Circle Koryo” and “How to Operate QC Circle Activities”.

Genichi Taguchi

Genichi Taguchi was yet another notable thinker who developed both the loss function and an adaptation of orthogonal arrays to designed experiments. He also advocated the concept of robustness. Taguchi devised an equation to quantify the decline of a customer’s perceived value of a product as its quality declines. This tells managers how much revenue they are losing because of variability in their production process. Taguchi’s loss function shows how monetary loss increases as variation increases.

Taguchi’s Loss Function
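The loss function is usually written in quadratic form, L(y) = k(y − m)², where m is the target value and k is a cost coefficient. The sketch below assumes that form and sets k from the cost incurred when a part sits exactly at its tolerance limit, which is a common convention. The target, tolerance and cost figures are hypothetical, as are the function names.

```python
def loss_coefficient(cost_at_limit, tolerance):
    """k such that a part exactly at the tolerance limit costs `cost_at_limit`."""
    return cost_at_limit / tolerance ** 2


def taguchi_loss(y, target, k):
    """Loss for a single unit measuring y when the target value is `target`."""
    return k * (y - target) ** 2


def expected_loss(process_mean, std_dev, target, k):
    """Average loss per unit for a process with the given mean and spread."""
    return k * (std_dev ** 2 + (process_mean - target) ** 2)


# Hypothetical figures: target 10.0 mm, tolerance of 0.5 mm either side,
# and a cost of 20.00 per unit when a part is right at the tolerance limit.
k = loss_coefficient(cost_at_limit=20.0, tolerance=0.5)

print(taguchi_loss(10.25, target=10.0, k=k))  # a part halfway to the limit
print(expected_loss(10.0, 0.25, target=10.0, k=k))  # tighter process
print(expected_loss(10.0, 0.50, target=10.0, k=k))  # twice the spread
```

Under these assumptions, doubling the standard deviation of a centred process quadruples the average loss per unit, even if every part remains within tolerance.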

In a production process there will undoubtedly be external factors or ‘noise’ which can cause deviations from the target. Isolating these factors to determine their individual effects can be very costly and time consuming. Taguchi devised a way to use orthogonal arrays to isolate these noise factors from all others in a cost effective manner. He went on to observe that some noise factors can be identified, isolated and even eliminated but others cannot. This prompted him to refer to the ability of a process or product to work as intended regardless of uncontrollable noise as robustness. The development of products and processes which perform uniformly regardless of uncontrollable forces has obvious benefits.

Quality Function Deployment

Along with Dr. Shigeru Mizuno, Dr. Yoji Akao is the founder of Quality Function Deployment (QFD). As far back as the 1960s, he was exploring ways to apply powerful Japanese problem solving algorithms to designing products right the first time. He initially used a fishbone diagram, and his more complex analyses led to a matrix that identifies the design elements with the greatest impact on customer satisfaction. This has become a key tool in the six sigma toolbox.

Shigeo Shingo

Shigeo Shingo was one of the world’s leading experts on manufacturing practices and the Toyota Production System (TPS). He is famous for his concepts of poka-yoke (mistake-proofing) and single-minute exchange of dies (SMED).

Total Quality Management

TQM was a popular management philosophy in the latter part of the twentieth century and was defined by Feigenbaum as:

“an effective system for integrating the quality development, quality maintenance and quality improvement efforts of the various groups in an organization so as to enable production and service at the most economical levels which allow for full customer satisfaction.”

~ FEIGENBAUM, 1986

TQM began with total quality control in the mid-1950s, but evolved into a way of working and thinking that focuses on:

- meeting the needs and expectations of customers;

- including every person in the organisation;

- examining all costs which are related to quality, especially failure costs;

- getting things ‘right first time’, i.e. designing quality in rather than inspecting it in;

- developing the systems and procedures which support quality and improvement;

- developing a continuous process of improvement.

(SLACK et al., 2001)

From this description it can be seen how TQM is a logical progression in the field of quality management, building on much of what has been mentioned previously. Despite the large-scale adoption of TQM in the late 1980s and early 1990s, the movement did not endure. It peaked in 1993 (PONZI & KOENIG, 2002) and thereafter lost momentum as managers became disillusioned. The main reasons for the disappointment were poor economic returns compounded by inflated expectations, poor implementation, poor understanding and too much focus on awards (HENDRICKS & SINGHAL, 1999). One such award was the Malcolm Baldrige National Quality Award (MBNQA).

Malcolm Baldrige National Quality Award

Malcolm Baldrige was the Secretary of Commerce between 1981 and 1987 (NATIONAL INSTITUTE OF STANDARDS & TECHNOLOGY, 2007). He was an advocate of quality management and worked to implement the Quality Improvement Act in America. In honour of his work, Congress named the award after him when they introduced it in 1987. The MBNQA is presented annually by the President of the United States to businesses that have shown outstanding performance in seven key areas:

- Leadership

- Strategic planning

- Customer & market focus

- Information & analysis

- Human resource focus

- Process management

- Business results

The award was established to increase awareness of the importance of quality and performance excellence as a source of competitive advantage. The assessment criteria for the award focus on two goals: delivering ever-improving value to customers and improving overall organisational performance, both of which are fundamental to the six sigma methodology. A report produced by the Council on Competitiveness (1995) concluded that:

“More than any other program, the Baldrige Quality Award is responsible for making quality a national priority and disseminating best practices across the United States.”

The award is similar to the Deming Prize, which was introduced in Japan by JUSE in 1951.

Motorola

In 1988 Motorola became one of the first recipients of the MBNQA. At the time, they were among the U.S. companies facing increasing competitive pressure from Japan, and as a result became the first company to implement a six sigma initiative. In 1986, in response to increasing warranty claims, senior engineer Bill Smith introduced the concept of six sigma as a way to standardise defect measurement. CEO Bob Galvin saw the potential of the method and adopted six sigma as a core business strategy. Motorola underwent a massive cultural change so that they were focused on meeting the quality standards of customers. They formulated a methodology to ensure consistency that involved documenting key processes, aligning the processes to customer requirements and implementing measurement systems to continuously improve the process. Since its introduction in the mid-1980s, Motorola have attributed savings of over sixteen billion US dollars to six sigma projects (HEURING, 2004).

AlliedSignal & General Electric

To further the award’s goal of increasing awareness of the importance of quality, recipients of the MBNQA are asked to share details of their quality practices with others. Motorola obliged, explaining their approach, which was soon adopted by others including AlliedSignal in 1991. AlliedSignal’s CEO, Larry Bossidy, demonstrated the business potential of six sigma by effectively turning around his company. In 1995 they had saved $175 million and in 1996, nearly twice as much again. Around this time, Bossidy introduced Jack Welch, CEO of GE, to the concept of six sigma. Welch made the methodology a corporate requirement and deployed it throughout his organisation with great intensity. His results are well documented:

“We plunged into Six Sigma with a company-consuming vengeance just over three years ago. We have invested more than a billion dollars in the effort, and the financial returns have now entered the exponential phase — more than three quarters of a billion dollars in savings beyond our investment in 1998, with a billion and a half in sight for 1999.”

~ GENERAL ELECTRIC COMPANY, 1998

Modern Day Six Sigma

Many companies now consider six sigma an important part of their strategy. They have invested heavily in six sigma training and created roles for it in their organisational hierarchy. Often these six sigma jobs are well compensated because of the skill set required of candidates.

Six sigma black belts are project managers who lead DMAIC projects to fix problems in existing business processes. They often hold a six sigma certification based on the number of projects completed, verified financial savings and the number of employees trained. Sometimes they go on to study lean manufacturing and become lean six sigma black belts.

Black belts are responsible for delivering a six sigma course to train green belts who will work for them on their projects. During green belt training the black belt will explain how to use six sigma tools. Often this is done in parallel with an actual project so that green belts get a chance to apply what they learn. Black belts become ambassadors for six sigma in the company and an integral part of developing employee capability.

The entry level of six sigma training in a company is often an overview or “sheep dip” that everybody goes through to familiarise them with the language. Employees with this level of training are sometimes called six sigma yellow belts.