Welcome to the third post in our six sigma tools mini series. We’ve already looked at the tools used in the define phase and measure phase, so check those out if you haven’t already. In this third post we’re going to be looking at the tools used in the analyse phase.

The analyse phase in six sigma is where the project team take a detailed look at the data they collected in the measure phase. The analyse phase deliverables are to identify the vital few inputs and process variables they need to adjust to properly control the outputs of the process. Once this relationship is understood they can start applying that knowledge to reducing variation in the outputs during the improve phase.

Let's have a look at some of the tools used in the analyse phase of DMAIC:

Pareto Charts

Pareto charts are bar charts in which the horizontal axis is split into categories such as defects. The chart then shows the frequency of each defect occurring in descending order of magnitude. Often a line graph is superimposed to show the cumulative frequency. The benefit of using a Pareto chart is that it clearly displays where to focus improvement efforts by separating the few problems of high importance from the many problems with low importance.

Pareto Chart
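As a rough sketch of the numbers behind a Pareto chart, the snippet below counts defects per category, sorts them in descending order and adds the cumulative percentage that the superimposed line would show. The defect log and the function name `pareto_table` are invented for illustration.

```python
from collections import Counter


def pareto_table(defects):
    """Return (category, count, cumulative %) rows sorted by descending count."""
    counts = Counter(defects).most_common()  # most frequent category first
    total = sum(count for _, count in counts)
    rows = []
    running = 0
    for category, count in counts:
        running += count
        rows.append((category, count, round(100 * running / total, 1)))
    return rows


# Hypothetical defect log, made up purely for this example
defect_log = (
    ["scratch"] * 12 + ["dent"] * 5 + ["misalignment"] * 2 + ["discolouration"] * 1
)

for category, count, cumulative in pareto_table(defect_log):
    print(f"{category:<15} {count:>3} {cumulative:>6}%")
```

In this made-up data the first two categories account for 85% of all defects, which is the kind of 'vital few' signal the chart is meant to expose.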

5 Whys

5 Whys is a tool that is used to find systematic causes of a problem so that an appropriate corrective action can be implemented. It simply involves asking why at least five times until a root cause is established. It requires taking the answer to the first why and asking why that occurs (LIKER, 2004). An example is shown below:

Eric Ries, entrepreneur-in-residence at Harvard Business School, explains how to find the human causes of technical problems.

Scatter Graphs

A scatter diagram is a graphical representation of the relationship between two parameters, typically an input and an output variable, in a process. In some cases the points will be scattered all over the graph with no visible trends. This data shows no correlation, meaning that no relationship exists. Otherwise a line of best fit is plotted to show what type of relationship exists between the two parameters:

- data sloping from the bottom left to the top right indicates positive correlation (as one variable increases, so does the other).

- data sloping from the top left to the bottom right indicates negative correlation (as one variable increases, the other decreases).

- data that displays a visible trend that is not linear usually indicates some other factor at work that interacts with one of the other factors. Multiple Regression or Design of Experiments should be used to discover the source of these patterns.

(GEORGE et al., 2005)

However, correlation does not always imply causality. Just because two factors display a relationship does not prove that one variable is causing the other. It is possible for a ‘lurking variable’ that was not included in the analysis to be the underlying cause.

Scatter Graph
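The strength and direction of a linear trend in a scatter graph can be summarised with the Pearson correlation coefficient, which runs from -1 (perfect negative correlation) through 0 (no linear correlation) to +1 (perfect positive correlation). The sketch below computes it by hand; the oven temperature and hardness readings are hypothetical.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Hypothetical input (oven temperature) and output (hardness) readings
temperature = [150, 160, 170, 180, 190]
hardness = [20.1, 22.3, 23.8, 26.2, 28.0]

r = pearson_r(temperature, hardness)
print(f"r = {r:.3f}")  # close to +1 means a strong positive linear trend
```

A value near zero only rules out a linear relationship, and a value near ±1 still says nothing about which variable, if either, is the cause.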

Ishikawa Diagrams

Ishikawa diagrams are named after their inventor, Kaoru Ishikawa. They are also commonly called cause and effect or ‘fishbone’ diagrams. The line along the centre represents the problem with all the possible causes branching off it. The advantage of using this tool is that causes are arranged according to their level of importance. The diagram shown below is a typical manufacturing example. In the case of a service process, the four main categories used are usually equipment, policies, procedures and people.

Ishikawa or Fishbone Diagram

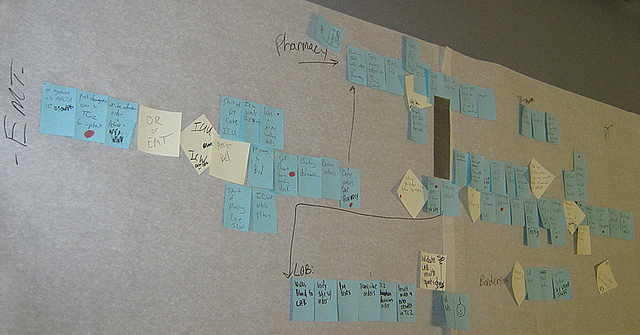

Non-Value Added (NVA) Analysis

NVA analysis is a tool used to distinguish the process steps that add value to the product from those that do not. A value added step is one for which customers are willing to pay. The objective is to identify and eliminate the steps in which no value is added. This reduces hidden costs, process cycle times and process complexity, which in turn leads to a reduced risk of an error occurring.

t-Test

A t-test is used to test whether a statistical parameter such as the mean is significantly different to another value. For example a one-sample t-test could analyse if the mean diameter of a sample of gears is significantly different to the target value. A two-sample t-test could analyse if the mean diameter of gears from one supplier is significantly different to that from a second supplier. In the example, the null hypothesis would be that the samples from each supplier do not differ significantly. The alternative hypothesis would be that they do differ significantly. The results show that the calculated value for t is greater than the tabulated value for twenty degrees of freedom at the 5% level and therefore suggest that the two samples differ significantly.
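To make the two-supplier comparison concrete, here is a minimal sketch of a pooled two-sample t-test. It assumes both samples have roughly equal variance, and the gear diameters are invented (eleven per supplier, which gives the twenty degrees of freedom mentioned above). The critical value 2.086 is the standard two-tailed tabulated t for 20 degrees of freedom at the 5% level.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t(sample_a, sample_b):
    """Return (t statistic, degrees of freedom) for a pooled two-sample t-test."""
    n_a, n_b = len(sample_a), len(sample_b)
    df = n_a + n_b - 2
    # Pooled variance: weighted average of the two sample variances
    pooled_var = (
        (n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)
    ) / df
    std_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(sample_a) - mean(sample_b)) / std_error, df


# Hypothetical gear diameters in mm, eleven gears from each supplier
supplier_a = [25.02, 25.05, 24.98, 25.01, 25.04, 25.00, 25.03, 24.99, 25.02, 25.06, 25.01]
supplier_b = [24.95, 24.97, 24.92, 24.99, 24.94, 24.96, 24.98, 24.93, 24.95, 24.97, 24.94]

t_stat, df = pooled_t(supplier_a, supplier_b)
T_CRITICAL = 2.086  # two-tailed, 5% level, 20 degrees of freedom

print(f"t = {t_stat:.2f} with {df} degrees of freedom")
if abs(t_stat) > T_CRITICAL:
    print("Reject the null hypothesis: the supplier means differ significantly.")
else:
    print("No significant difference detected between suppliers.")
```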

Chi-Squared Test

A chi-squared test is used to test the validity of a hypothesis when both the input variable and output variable are discrete. An example would be “does the operator affect whether the product passes on the test rig?” In this case the null hypothesis would be that the two variables are independent and therefore the choice of operator makes no difference to the test rig outcome. The alternative hypothesis is that the choice of operator has an effect on whether or not the unit passes or fails on the test rig.
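The operator example can be sketched as a chi-squared test of independence on a contingency table. The pass/fail counts below are hypothetical, no continuity correction is applied, and 3.841 is the standard tabulated critical value for one degree of freedom at the 5% level.

```python
def chi_squared(observed):
    """Return (chi-squared statistic, degrees of freedom) for a contingency table."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(observed):
        for j, count in enumerate(row):
            # Count expected in this cell if the two variables were independent
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (count - expected) ** 2 / expected
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return statistic, df


# Hypothetical test rig results: rows are operators, columns are [pass, fail]
results = [
    [45, 5],   # operator A
    [30, 20],  # operator B
]

chi2, df = chi_squared(results)
CHI2_CRITICAL = 3.841  # 5% level, 1 degree of freedom

print(f"chi-squared = {chi2:.2f} with {df} degree(s) of freedom")
if chi2 > CHI2_CRITICAL:
    print("Reject the null hypothesis: the outcome depends on the operator.")
else:
    print("No evidence that the operator affects the outcome.")
```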

ANOVA

Analysis of variance, or ANOVA for short, is used to compare three or more samples to see if their means differ significantly. To do this, a one-way ANOVA considers three sources of variability: total, between and within. Total refers to the total variability between all observations. Between refers to the variation between subgroup means, and within refers to the random variation within each subgroup, or ‘noise’ (GEORGE et al., 2005).

A one-way ANOVA involves just one factor and tests whether the mean result of any alternative is significantly different. An example would be the fuel consumption of three different cars. A two-way ANOVA uses the same principles as a one-way, but tests how different levels of two factors affect the output variable. An example would be the fuel consumption of the three cars being driven in, say, two different locations. The two-way ANOVA has the added benefit of showing the impact that the location has on the output variable. It may not be significant, but if one location was much hotter than the other then it is likely the drivers would use the air conditioning system and therefore increase fuel consumption.
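The three-car example can be sketched as a one-way ANOVA that splits the total variability into its between and within parts and forms the F ratio from them. The fuel consumption figures are hypothetical; the tabulated 5% critical value of F for (2, 9) degrees of freedom is about 4.26.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (SS total, SS between, SS within, F) for a list of samples."""
    all_values = [value for group in groups for value in group]
    grand_mean = mean(all_values)
    ss_total = sum((value - grand_mean) ** 2 for value in all_values)
    # Between: how far each subgroup mean sits from the grand mean
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within: random variation ('noise') around each subgroup mean
    ss_within = sum((value - mean(g)) ** 2 for g in groups for value in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return ss_total, ss_between, ss_within, f_ratio


# Hypothetical fuel consumption (mpg) from four runs of each car
car_a = [30, 32, 31, 29]
car_b = [35, 36, 34, 37]
car_c = [28, 27, 29, 30]

ss_total, ss_between, ss_within, f_ratio = one_way_anova([car_a, car_b, car_c])
F_CRITICAL = 4.26  # approximate 5% value for (2, 9) degrees of freedom

print(f"SS total = {ss_total}, between = {ss_between}, within = {ss_within}")
print(f"F = {f_ratio:.1f}")
print("Means differ significantly" if f_ratio > F_CRITICAL else "No significant difference")
```

Note that the between and within sums of squares add up to the total, which is a handy sanity check on any hand calculation.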

Design Of Experiments (DOE)

Design of experiments is used to test and optimise a process. It uses tests of statistical significance, correlation and regression to enable understanding of process behaviour under varying conditions. It differs from empirical observations because rather than just observing, it allows control to be taken of the variables. It is useful for:

- Finding optimal settings.

- Identifying and quantifying the factors that have the biggest impact on the output.

- Identifying factors that do not have a big impact on quality or time (and therefore can be set at the most convenient and/or least costly levels).

- Quickly screening a large number of factors to determine the most important ones.

- Reducing the time and number of experiments needed to test multiple factors.

(GEORGE et al., 2005)

Traditionally, to understand a system, experiments are carried out that test only one variable at a time. This can be time consuming and expensive as many ‘runs’ can be required. DOE is much more economical and efficient:

“DOE takes the art out of experimentation and substitutes science in its place.”

~ HOCKMAN & JENKINS, 1994

The other advantage that DOE has over the one-at-a-time approach is that it will highlight interactions between factors. The basic steps are as follows:

- Identify factors to be evaluated.

- Establish levels of factors to be tested.

- Create an experimental array.

- Conduct the experiment under the prescribed conditions.

- Evaluate the results.

(PANDE et al., 2000)
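The steps above can be sketched for the simplest case, a two-level full factorial design with two factors. The factor names and response values are hypothetical; the point is only to show how the experimental array is built and how main effects and the interaction are read from the results.

```python
from itertools import product
from statistics import mean

# Steps 1 and 2: two hypothetical factors, each at a low (-1) and high (+1) level
factors = ["temperature", "pressure"]

# Step 3: the experimental array is every combination of levels
design = list(product([-1, 1], repeat=len(factors)))

# Step 4: hypothetical measured output for each run, in the same order as design
responses = [20, 30, 40, 70]


def effect(signs, ys):
    """Mean response where sign is +1 minus mean response where sign is -1."""
    high = [y for s, y in zip(signs, ys) if s > 0]
    low = [y for s, y in zip(signs, ys) if s < 0]
    return mean(high) - mean(low)


# Step 5: evaluate main effects and the two-factor interaction
temperature_effect = effect([run[0] for run in design], responses)
pressure_effect = effect([run[1] for run in design], responses)
interaction_effect = effect([run[0] * run[1] for run in design], responses)

print("temperature:", temperature_effect)
print("pressure:", pressure_effect)
print("interaction:", interaction_effect)
```

A one-at-a-time experiment on the same data could estimate each factor's effect, but it would have no run with both factors high and so could not reveal the interaction term.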

Taguchi Methods

Taguchi methods are similar to DOE in that they are both tools used to analyse a process that has multiple input variables that affect the output variable. The main difference is in the way they handle interactions between input variables. DOE starts off by assuming all inputs interact with all other inputs, whereas Taguchi Methods assume that some knowledge of the process already exists, which is used to make the experiments more efficient (CESARONE, 2001). By not investigating interactions that are known not to exist, the number of runs can be reduced and therefore results arrived at more quickly.

Another difference is that Taguchi Methods distinguish between factors that are controllable (control factors), and those that are not (noise factors). Due to the reduced number of tests, Taguchi Methods manage to test each combination more than once, typically with different levels of noise factors:

“For example, we might test a particular set of inputs at high temperature and high humidity, high temperature and low humidity, low temperature and high humidity and low temperature and low humidity…to inject maximum variability into experimental designs.”

~ CESARONE, 2001

The reason for doing this is that it not only determines which combination produces the highest output, but also which is the most repeatable or ‘robust’.
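A simplified sketch of that idea follows: each control setting is repeated under every combination of the two noise factors from the quote above, and the settings are then compared on both average output and spread. The setting names and measurements are hypothetical, and the sample standard deviation is used here as a plain stand-in for robustness.

```python
from itertools import product
from statistics import mean, stdev

# Noise factors are varied deliberately; every combination is one repeat
noise_conditions = list(
    product(["high temperature", "low temperature"], ["high humidity", "low humidity"])
)

# Hypothetical output for two control settings, one value per noise condition
results = {
    "setting A": [50.0, 42.0, 58.0, 46.0],
    "setting B": [48.0, 47.0, 49.0, 48.0],
}

summary = {name: (mean(ys), stdev(ys)) for name, ys in results.items()}

highest_output = max(summary, key=lambda name: summary[name][0])
most_robust = min(summary, key=lambda name: summary[name][1])

for name, (average, spread) in summary.items():
    print(f"{name}: mean = {average:.1f}, standard deviation = {spread:.2f}")
print("Highest output:", highest_output)
print("Most robust:", most_robust)
```

In this invented data the setting with the highest average is not the most repeatable one, which is exactly the trade-off the repeated runs are designed to reveal.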

Regression Analysis

Regression is used in conjunction with scatter graphs to define a model that links the input variables to the output variable, which can then be used to predict future performance. Linear Regression is used for one input and one output variable. Multiple Regression is used when there is more than one input variable and is particularly useful because it quantifies the impact of each input on the output and shows how they interact.
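For the single-input case, a least-squares line can be fitted in a few lines of code. This is a minimal sketch with hypothetical data; `fit_line` is an illustrative name, not a library function.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical input setting and measured output
input_setting = [1, 2, 3, 4, 5]
measured_output = [2.1, 3.9, 6.2, 7.8, 10.1]

slope, intercept = fit_line(input_setting, measured_output)
print(f"output = {slope:.2f} * input + {intercept:.2f}")

# Use the model to predict the output at a new input setting
new_input = 6
print(f"predicted output at {new_input}: {slope * new_input + intercept:.2f}")
```

Predictions are only trustworthy within, or close to, the range of inputs that was actually measured.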

Summary

The six sigma analyse phase is the third of the five stages of a DMAIC project. This is where a number of statistical analyses are used to identify the variables which consistently control the process. Every project is different of course, but tools typically used during the DMAIC analyse phase include linear regression, design of experiments and Pareto analysis.

Once the analyse phase deliverables are complete, the next step is the improve phase!

- Define Phase

- Measure Phase

- Improve Phase

- Control Phase